README Documentation

Cognee - Build AI memory with a Knowledge Engine that learns

Demo · Docs · Learn More · Join Discord · Join r/AIMemory · Community Plugins & Add-ons

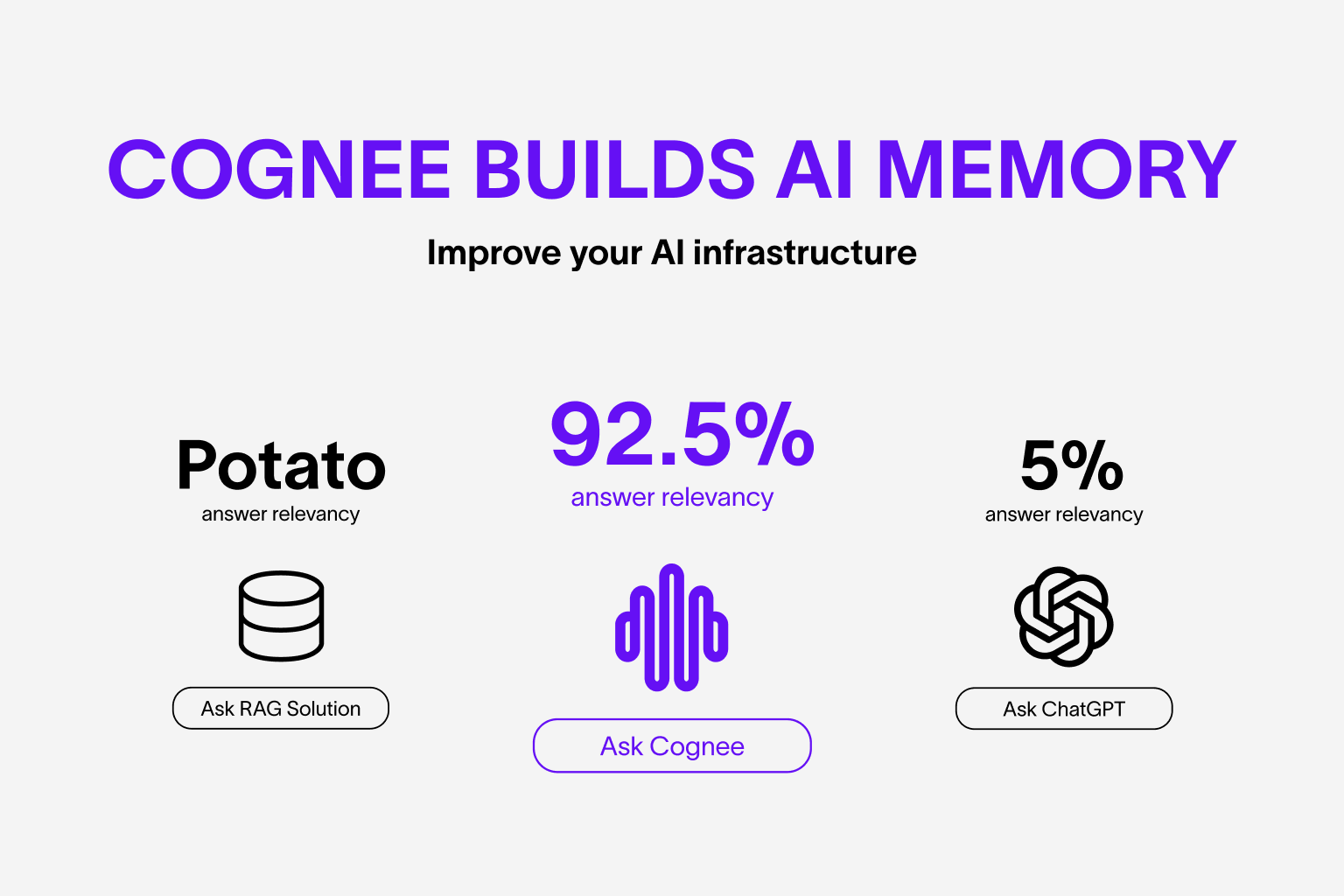

Use our knowledge engine to build personalized and dynamic memory for AI Agents.

🌐 Available Languages : Deutsch | Español | Français | 日本語 | 한국어 | Português | Русский | 中文

About Cognee

Cognee is an open-source knowledge engine that lets you ingest data in any format or structure and continuously learns to provide the right context for AI agents. It combines vector search, graph databases and cognitive science approaches to make your documents both searchable by meaning and connected by relationships as they change and evolve.

⭐ Help us reach more developers and grow the Cognee community. Star this repo!

Why use Cognee:

- Knowledge infrastructure - unified ingestion, graph/vector search, runs locally, ontology grounding, multimodal

- Persistent and Learning Agents - learn from feedback, context management, cross-agent knowledge sharing

- Reliable and Trustworthy Agents - agentic user/tenant isolation, traceability, OTEL collector, audit trails

Basic Usage & Feature Guide

To learn more, check out this short, end-to-end Colab walkthrough of Cognee's core features.

Quickstart

Let’s try Cognee in just a few lines of code. For detailed setup and configuration, see the Cognee Docs.

Prerequisites

- Python 3.10 to 3.13
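A quick way to confirm that your interpreter falls within this range is sketched below. It uses only the standard library; the helper name `is_supported` is hypothetical and not part of Cognee.

```python
import sys

# Supported range taken from the prerequisite above: 3.10 through 3.13 inclusive.
MIN_SUPPORTED = (3, 10)
MAX_SUPPORTED = (3, 13)


def is_supported(version=None):
    """Return True if the (major, minor) version is within the supported range."""
    if version is None:
        version = sys.version_info
    major_minor = tuple(version[:2])
    return MIN_SUPPORTED <= major_minor <= MAX_SUPPORTED


print(f"Python {sys.version_info.major}.{sys.version_info.minor} supported: {is_supported()}")
```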

Step 1: Install Cognee

You can install Cognee with pip, poetry, uv, or your preferred Python package manager.

uv pip install cognee

Step 2: Configure the LLM

import os
os.environ["LLM_API_KEY"] = "YOUR_OPENAI_API_KEY"

Alternatively, create a .env file using our template.

To integrate other LLM providers, see our LLM Provider Documentation.
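If you keep the key in a .env file, the sketch below shows one way to read it into the environment before importing Cognee. It is a minimal standard-library parser written for illustration: `load_env_file` is a hypothetical helper, it handles only plain `KEY=VALUE` lines, and it is not how Cognee itself reads configuration. The only variable name assumed here is `LLM_API_KEY` from the step above.

```python
import os
from pathlib import Path


def load_env_file(path=".env"):
    """Parse simple KEY=VALUE lines and copy them into os.environ.

    Variables that are already set in the environment are left unchanged.
    Returns a dict of the pairs found in the file.
    """
    env_path = Path(path)
    if not env_path.is_file():
        return {}
    loaded = {}
    for raw_line in env_path.read_text(encoding="utf-8").splitlines():
        line = raw_line.strip()
        # Skip blanks, comments, and lines without an assignment.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        key = key.strip()
        # Drop surrounding whitespace and optional quotes around the value.
        value = value.strip().strip("\"'")
        if key:
            loaded[key] = value
            os.environ.setdefault(key, value)
    return loaded


load_env_file()
if not os.environ.get("LLM_API_KEY"):
    print("LLM_API_KEY is not set; export it or add it to .env before running Cognee.")
```

Checking for the key up front gives a clear message early, rather than a provider error partway through the pipeline.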

Step 3: Run the Pipeline

Cognee takes your documents, loads them into the knowledge engine, and searches the combined vector and graph relationships.

Now, run a minimal pipeline:

import cognee
import asyncio
from pprint import pprint


async def main():
    # Add text to cognee
    await cognee.add("Cognee turns documents into AI memory.")

    # Add to knowledge engine
    await cognee.cognify()

    # Query the knowledge graph
    results = await cognee.search("What does Cognee do?")

    # Display the results
    for result in results:
        pprint(result)


if __name__ == '__main__':
    asyncio.run(main())

As you can see, the output is generated from the document we previously stored in Cognee:

Cognee turns documents into AI memory.

Use the Cognee CLI

As an alternative, you can get started with these essential commands:

cognee-cli add "Cognee turns documents into AI memory."

cognee-cli cognify

cognee-cli search "What does Cognee do?"

cognee-cli delete --all

To open the local UI, run:

cognee-cli -ui
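The add, cognify, and search commands above can also be driven from a script. The sketch below wraps them with `subprocess.run`; it assumes `cognee-cli` is on your PATH and uses only the subcommands shown in this section. The helper names `build_workflow` and `run_workflow` are hypothetical, and whether `delete --all` asks for confirmation is not covered here.

```python
import subprocess


def build_workflow(text, query, cleanup=False):
    """Return the argv lists for the add -> cognify -> search sequence."""
    commands = [
        ["cognee-cli", "add", text],
        ["cognee-cli", "cognify"],
        ["cognee-cli", "search", query],
    ]
    if cleanup:
        # Removes all stored data, as in the final command shown above.
        commands.append(["cognee-cli", "delete", "--all"])
    return commands


def run_workflow(commands, runner=subprocess.run):
    """Run each command in order; a failing command stops the sequence."""
    for argv in commands:
        # check=True makes subprocess.run raise CalledProcessError on a non-zero exit.
        runner(argv, check=True)
```

To run it, call `run_workflow(build_workflow("Cognee turns documents into AI memory.", "What does Cognee do?"))`. Passing arguments as a list avoids shell quoting problems with the text and query strings.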

Demos & Examples

See Cognee in action:

Persistent Agent Memory

Community & Support

Contributing

We welcome contributions from the community! Your input helps make Cognee better for everyone. See CONTRIBUTING.md to get started.

Code of Conduct

We're committed to fostering an inclusive and respectful community. Read our Code of Conduct for guidelines.

Research & Citation

We recently published a research paper on optimizing knowledge graphs for LLM reasoning:

@misc{markovic2025optimizinginterfaceknowledgegraphs,
      title={Optimizing the Interface Between Knowledge Graphs and LLMs for Complex Reasoning},
      author={Vasilije Markovic and Lazar Obradovic and Laszlo Hajdu and Jovan Pavlovic},
      year={2025},
      eprint={2505.24478},
      archivePrefix={arXiv},
      primaryClass={cs.AI},
      url={https://arxiv.org/abs/2505.24478},
}